
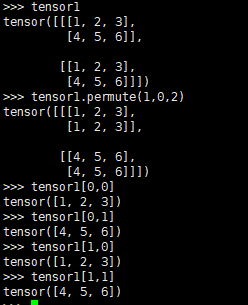

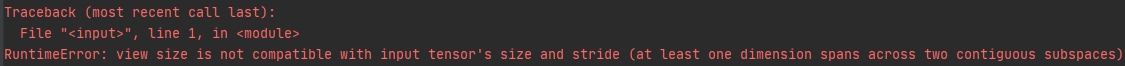

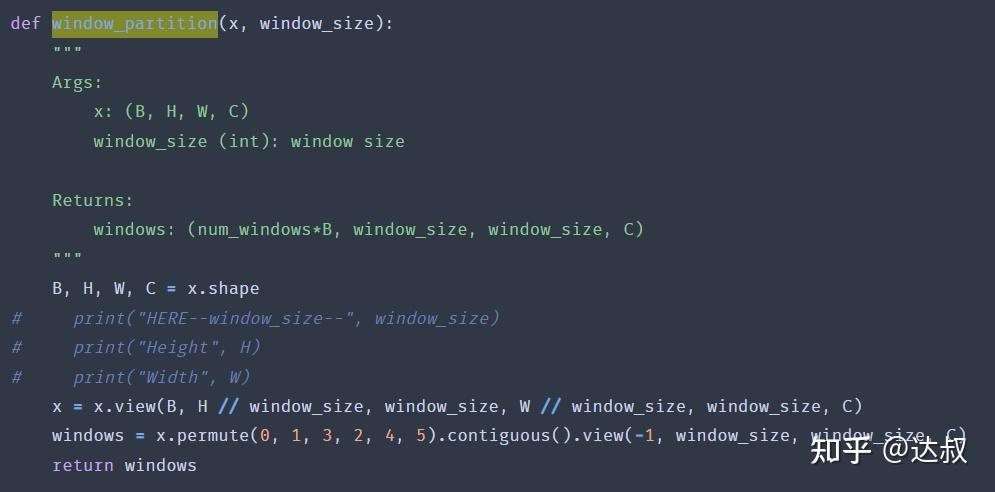

`permute` is quite different from `view` and `reshape`. `view` and `reshape` keep the elements in the same flat order and only regroup them into a new shape, whereas `permute` reorders the axes themselves. The numbers passed to `torch.permute` are the indices of the original axes, listed in the order you want them to have in the new tensor. A typical use: given a batch of sequences that we want to iterate over, we permute the tensor so that the sequence dimension (S) comes first.

`view` requires a shape that is compatible with the tensor's existing strides, so it can fail on a non-contiguous tensor such as the result of `transpose` or `permute`. `reshape` also works on non-contiguous tensors: in that case it behaves like `contiguous()` followed by `view`, and `contiguous()` makes a copy. Have a look at this example to demonstrate the behavior: `x = torch.arange(4*10*2).view(4, 10, 2)`.

From the `torch.reshape` documentation: when possible, the returned tensor will be a view of the input. Contiguous inputs and inputs with compatible strides can be reshaped without copying, but you should not depend on the copying vs. viewing behavior. See `torch.Tensor.view()` on when it is possible to return a view. A single dimension may be -1, in which case it is inferred from the remaining dimensions and the number of elements in the input.

On a separate question about calling `add` on a `Sequential`: the code in which you found `add` being used may have included lines that patched `torch.nn.Module` with a function like `def add_module(self, module): self.add_module(str(len(self) + 1), module)` followed by `torch.nn.Module.add = add_module`. After doing this, you can add a `torch.nn.Module` to a `Sequential` as you posted in the question.

For `torch.nn.functional.one_hot`, the `tensor` parameter is a `LongTensor` of class values of any shape.
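To make the difference concrete, here is a minimal sketch built on the `x` from the example above. The variable names (`permuted`, `viewed`, `flat_a`, and so on) are only illustrative, and the choice of swapping the first two axes is an assumption made for the demonstration.

```python
import torch

x = torch.arange(4 * 10 * 2).view(4, 10, 2)

# permute reorders the axes: permuted[i, j, k] == x[j, i, k]
permuted = x.permute(1, 0, 2)

# view keeps the flat element order and only regroups it
viewed = x.view(10, 4, 2)

# Same shape, but the elements sit in different positions
print(permuted.shape == viewed.shape)
print(torch.equal(permuted, viewed))

# permute returns a non-contiguous view, so a flattening view() fails
print(permuted.is_contiguous())
try:
    permuted.view(-1)
    view_failed = False
except RuntimeError:
    view_failed = True

# reshape handles this case; here it matches contiguous() followed by view()
flat_a = permuted.reshape(-1)
flat_b = permuted.contiguous().view(-1)

# A single -1 is inferred from the remaining dimensions
inferred = x.reshape(-1, 2)
```

If the goal is to move an axis, `permute` (or `transpose`) is the tool; `view` and `reshape` are for regrouping elements without changing their order.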

`torch.reshape` returns a tensor with the same data and number of elements as the input, but with the specified shape. It tries to return a view if possible; otherwise it copies the data to a contiguous tensor and returns a view of that copy.

`torch.permute()` rearranges the dimensions of the original tensor according to the desired ordering and returns a new tensor with its axes reordered.

I've written a simple function to visualize a PyTorch tensor using matplotlib. `show(x)` gives the visualization of `x`, where `x` should be a `torch.Tensor`. The input can be a single tensor or multiple tensors:

- If `x` is a 2D tensor, it is shown as a grayscale map.
- If `x` is a 3D tensor, the function shows the first 3 channels at most (in RGB format).
- If `x` is a 4D tensor (such as an image batch of size b(atch)\*c(hannel)\*h(eight)\*w(idth)), the function splits `x` along the batch dimension and shows b subplots in total, where each subplot displays the first 3 channels (3\*h\*w) at most.
- `show(x, y, z)` produces three windows, displaying `x`, `y`, and `z` respectively, where each can be in any of the forms described above.

Internally the function branches on the batch size `bz` and the channel count `c`:

- `bz == 1 and c == 1`: single grayscale image
- `bz == 1 and c > 3`: multiple feature maps
- `bz > 1 and c == 1`: multiple grayscale images
- `bz > 1 and c == 3`: multiple RGB images
- `bz > 1 and c > 3`: multiple feature maps

Batches are laid out with `plt.subplot`, using `int(bz**0.5)` as the first grid dimension. When an image has more than 3 channels the function prints `'warning: more than 3 channels! only channels 0,1,2 are preserved!'`, and for anything else it raises `Exception("unsupported type! " + str(img.size()))`. Note that plotting an image with shape (C, H, W) directly does not work as intended, because matplotlib expects the channel axis last.

`torch.nn.functional.one_hot` takes a `LongTensor` of index values with shape `(*)` and returns a tensor of shape `(*, num_classes)` that has zeros everywhere except where the index of the last dimension matches the corresponding value of the input tensor, in which case it is 1.
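As a small illustration of the `one_hot` shape rule, the sketch below encodes a 1D and a 2D index tensor. The example values and the names `labels`, `grid`, `encoded`, and `grid_encoded` are made up for the demonstration.

```python
import torch
import torch.nn.functional as F

# 1D input of shape (3,) gives an output of shape (3, num_classes)
labels = torch.tensor([0, 2, 1])
encoded = F.one_hot(labels, num_classes=3)
print(encoded)

# Any input shape works: (2, 2) becomes (2, 2, num_classes)
grid = torch.tensor([[0, 1], [2, 0]])
grid_encoded = F.one_hot(grid, num_classes=3)
print(grid_encoded.shape)

# Taking argmax over the last dimension recovers the original indices
recovered = grid_encoded.argmax(dim=-1)
```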
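A forum question titled "Pytorch permute not changing desired index" describes a related problem: "I am trying to use the permute function to swap the axes of my tensor, but for some reason the output is not as expected. The output of the code is `torch.Size([512, 256, 3, 3])`, but I would expect it to be `torch.Size([256, 512, 3, 3])`. It doesn't look like I can use `flip` to switch the 0 and 1 indices."

For image tensors, one suggestion is to assign `tensor_image` the result of a `view` call that passes the three entries of `tensor_image.shape` in a different order. Be careful with this: `view` only regroups the elements in memory order and does not move axes, so converting an image from (C, H, W) to (H, W, C) needs `permute(1, 2, 0)` instead.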
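The question does not include the code, so the cause can only be guessed. One common pitfall, shown here as a sketch under that assumption, is that `permute` is not in-place and its result has to be assigned. The tensor `weights` is a hypothetical stand-in with the shape from the question.

```python
import torch

weights = torch.zeros(512, 256, 3, 3)

# permute returns a new view; discarding the result leaves `weights` as it was
weights.permute(1, 0, 2, 3)
shape_after_discard = weights.shape

# Assigning the result gives the expected shape
swapped = weights.permute(1, 0, 2, 3)
print(swapped.shape)

# transpose(0, 1) is equivalent when only two axes are exchanged
transposed = weights.transpose(0, 1)

# flip reverses the order of elements along an axis; it never swaps axes
flipped = weights.flip(0)
print(flipped.shape)
```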

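`print(type(tensor_image), tensor_image.shape)` is a quick way to check the type and shape of the result.

In `torch.stack`, the `dim` parameter is the dimension to insert.

A separate forum thread, "How is permutation implemented in PyTorch cuda" (Fei Wang, March 5, 2019), asks: "Hi, I am interested to find out how PyTorch cuda implement permutations."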
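One observation that bears on that question, offered as a sketch on a CPU tensor rather than a description of any CUDA kernel: `permute` itself does not move data. It returns a view over the same storage with the sizes and strides reordered, and elements are only rearranged in memory when something like `contiguous()` materializes a copy. The names `base`, `view_only`, and `materialized` are illustrative.

```python
import torch

base = torch.arange(24).view(2, 3, 4)
print(base.stride())          # strides of the contiguous layout

# permute reorders sizes and strides; the underlying storage is shared
view_only = base.permute(2, 0, 1)
print(view_only.shape, view_only.stride())
shares_storage = view_only.data_ptr() == base.data_ptr()

# contiguous() performs the actual data movement into new memory
materialized = view_only.contiguous()
print(materialized.stride())
was_copied = materialized.data_ptr() != base.data_ptr()
```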

`torch.stack(tensors, dim=0, *, out=None) → Tensor` concatenates a sequence of tensors along a new dimension. The `tensors` parameter is the sequence of tensors to concatenate.

For the heatmap function, you need to get rid of the `if`s and the `for` loop and make it a vectorized function. To do that, you can use masks and calculate everything in one pass. Here it is in outline: `def heatmap(tensor: torch.Tensor) -> torch.Tensor:` begins with `assert tensor.dim() == 2` and then expands the tensor to create one more dimension for the multiplication.

PyTorch image tensors are channel first, so to use them with matplotlib you need to rearrange them so that the channel axis comes last. As you can see, matplotlib works fine even without conversion to a NumPy array.
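A minimal sketch of both points: `torch.stack` builds a channel-first image from three planes, and `permute` moves the channel axis last for plotting. The image size and channel values are arbitrary, and the names `chw`, `hwc`, and `wrong` are illustrative.

```python
import torch

height, width = 4, 5
red = torch.full((height, width), 1.0)
green = torch.full((height, width), 0.5)
blue = torch.zeros(height, width)

# stack inserts a new leading dimension: three (H, W) planes become (3, H, W)
chw = torch.stack([red, green, blue], dim=0)
print(chw.shape)

# permute moves the channel axis last, giving the (H, W, C) layout for plotting
hwc = chw.permute(1, 2, 0)
print(hwc.shape, hwc[0, 0])

# view with the same target shape only regroups memory and mixes up the channels
wrong = chw.view(height, width, 3)
print(wrong[0, 0])
```

Assuming matplotlib is installed, `plt.imshow(hwc)` can then display the result directly.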
